If metrics do not appear after several minutes, check your configuration files for typos, and run docker-compose ps to confirm that both the prometheus and node-exporter containers are running. To inspect what the containers are doing, follow their logs with docker-compose logs -f. You can also confirm ingestion by navigating to the billing dashboard.

In the next step, we’ll create the Prometheus configuration file, which Compose will read from.

Step 2: Create the Prometheus configuration file

In this step, you’ll configure Prometheus to scrape node-exporter metrics and ship them to Grafana Cloud.

Create a Prometheus configuration file named prometheus.yml in the same directory as docker-compose.yml with the following:

- global: Global Prometheus config defaults. In this example, we set the scrape_interval for scraping metrics from configured jobs to 15 seconds.
- remote_write: Configuration for Prometheus to send scraped metrics to a remote endpoint.

node-exporter | level=info ts=T21:33:36.852Z caller=node_exporter.go:115 collector=vmstat
node-exporter | level=info ts=T21:33:36.852Z caller=node_exporter.go:115 collector=xfs
node-exporter | level=info ts=T21:33:36.852Z caller=node_exporter.go:115 collector=zfs
node-exporter | level=info ts=T21:33:36.852Z caller=node_exporter.go:199 msg="Listening on" address=:9100
node-exporter | level=info ts=T21:33:36.852Z caller=tls_config.go:191 msg="TLS is disabled." http2=false

You can now move on to querying these metrics from Grafana Cloud.

Step 3: Verify that metrics are being ingested

In this step, you’ll query your Prometheus metrics from Grafana Cloud.

Click Explore in the left-side menu to start. This will take you to the Explore view.

At the top of the page, use the dropdown menu to select your Prometheus data source. Use the Metrics browser to find the node_disk_io_now metric, then click on the job label and node label value. Recall that we set the job_name to node in our prometheus.yml configuration file. If you cannot see metrics in the dropdown, metrics are not being ingested.
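For reference, the prometheus.yml from Step 2 might look roughly like the sketch below. The 15-second scrape_interval, the node job name, and port 9100 come from the text and log output above. The node-exporter:9100 target assumes the exporter is reachable under its service name on the Compose network, and the three angle-bracket values are placeholders that you would replace with the details of your own Grafana Cloud stack.

```yaml
# Sketch only: replace the placeholder values before use.
global:
  # How often Prometheus scrapes the configured jobs
  scrape_interval: 15s

scrape_configs:
  # The job name becomes the "job" label seen in the Metrics browser
  - job_name: node
    static_configs:
      # Assumption: the exporter is resolvable by its Compose service name
      - targets: ['node-exporter:9100']

remote_write:
  # Placeholders, not real values: use your stack's endpoint and credentials
  - url: '<your remote_write endpoint>'
    basic_auth:
      username: '<your metrics instance ID>'
      password: '<your Grafana Cloud API key>'
```

Keeping the API key in a file that is excluded from version control is a sensible precaution, since anyone holding it can publish metrics to your stack.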

prometheus.yml:/etc/prometheus/prometheus.yml
'-config.file=/etc/prometheus/prometheus.yml'
'=/etc/prometheus/consoles'

The prometheus service persists its data to a local directory on the host at. Docker Compose will create this directory after starting the prometheus container.

For the node-exporter service, we mount some necessary paths from the host into the container in :ro or read-only mode.

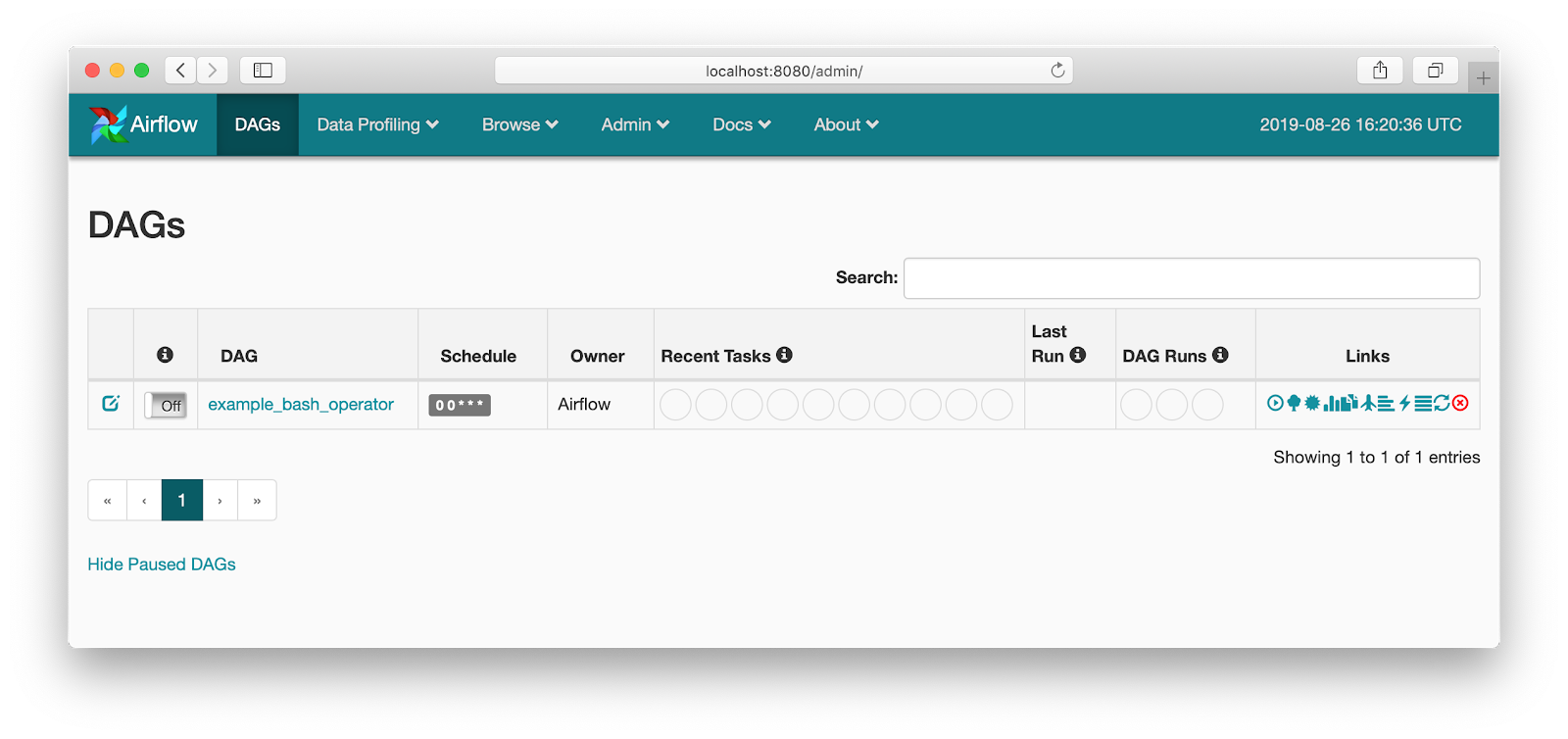

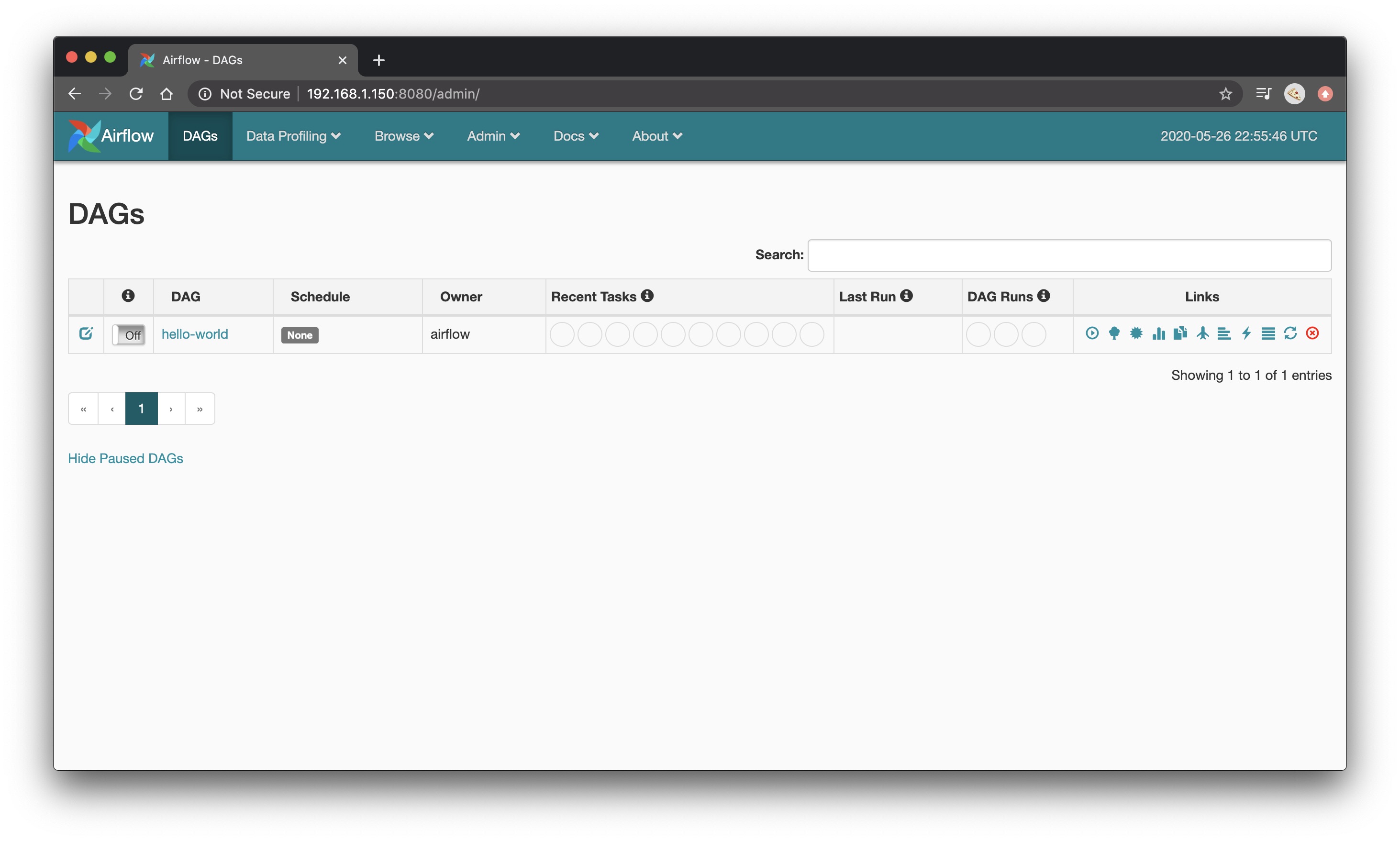

Monitoring a Linux host with Prometheus, Node Exporter, and Docker Compose

In this guide, you’ll learn how to run Prometheus and Node Exporter as Docker containers on a Linux machine, with the containers managed by Docker Compose. Docker Compose is a tool for defining and managing multi-container Docker applications. It uses YAML files to configure the application’s services, and it creates and starts all the containers with a single command. In combination, these tools allow you to collect detailed metrics from your Linux host, store them efficiently, and visualize them in a Grafana dashboard.

This guide walks you through setting up these tools to monitor your Linux host. You’ll mount the relevant host directories into the Node Exporter and Prometheus containers, and configure Prometheus to scrape Node Exporter metrics and push them to Grafana Cloud. You’ll then install a preconfigured dashboard or create your own to visualize these system metrics.

Prerequisites

Before you begin, you should have the following available:

- A Grafana Cloud API key with the Metrics Publisher role. To create an account, see Grafana Cloud and click Start for free.
- Docker and Docker Compose installed on your Linux machine.

In this step, you’ll create a docker-compose.yml file that defines the prometheus and node-exporter services, as well as the monitoring bridge network. Open a file called docker-compose.yml in your favorite editor and paste in the following:
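A minimal sketch of the docker-compose.yml described above is shown here. It is not the guide's original file: the image tags, container names, exposed ports, node-exporter mount points, and --path.* flags are assumptions based on common usage of the prom/prometheus and prom/node-exporter images. The host data directory for Prometheus is left out because its path is not given in the text.

```yaml
# Sketch only: image tags, mount points, and flags are assumptions.
networks:
  # Bridge network shared by both services
  monitoring:
    driver: bridge

services:
  node-exporter:
    image: prom/node-exporter:latest
    container_name: node-exporter
    restart: unless-stopped
    volumes:
      # Host paths mounted read-only (:ro) so the exporter can read host stats
      - /proc:/host/proc:ro
      - /sys:/host/sys:ro
      - /:/rootfs:ro
    command:
      # Point the exporter at the mounted host paths
      - '--path.procfs=/host/proc'
      - '--path.sysfs=/host/sys'
      - '--path.rootfs=/rootfs'
    expose:
      - 9100
    networks:
      - monitoring

  prometheus:
    image: prom/prometheus:latest
    container_name: prometheus
    restart: unless-stopped
    volumes:
      # Configuration file kept next to docker-compose.yml
      - ./prometheus.yml:/etc/prometheus/prometheus.yml
    command:
      - '--config.file=/etc/prometheus/prometheus.yml'
    expose:
      - 9090
    networks:
      - monitoring
```

Because both services join the monitoring network, Prometheus can reach the exporter by its service name, so no host ports need to be published for scraping.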
